Forecasting: From Statistical to Machine Learning Models

Forecasting is crucial for strategic planning, but selecting the most suitable forecasting method is often a challenge. Different techniques offer different benefits and drawbacks, so the choice is unclear without proper evaluation. This analysis systematically reviews forecasting methods, from basic approaches such as Naïve and Moving Average, through statistical models like ARIMA and SARIMA, to modern machine learning methods. It clarifies which methods best fit specific data characteristics, performance needs, and practical considerations.

The Complexity of Forecasting Choices

Choosing an effective forecasting method can be difficult because of the variety and complexity of available techniques. A method that is poorly matched to the data can produce unreliable predictions, leading to misguided decisions and lost opportunities. Sophisticated techniques often provide greater accuracy, but at the cost of added complexity and a higher risk of overfitting, especially when little data is available. Without a clear understanding of these trade-offs, the forecasting process becomes inefficient and uncertain.

Systematic Evaluation of Forecasting Methods

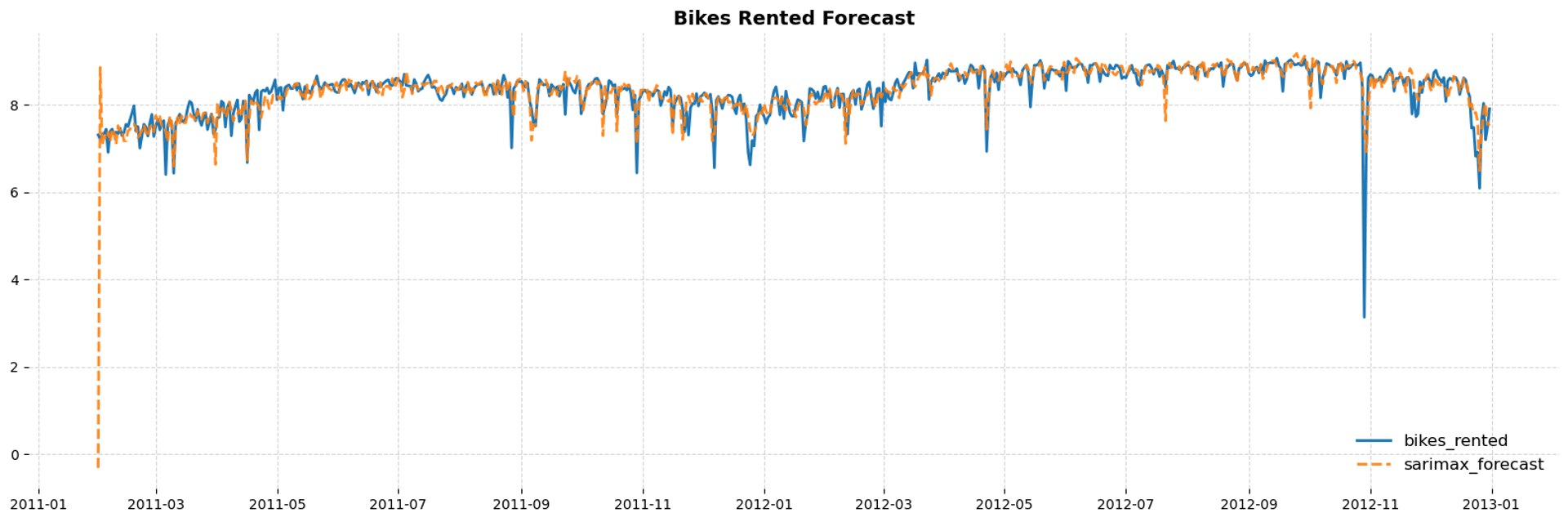

A comparative analysis gives clear insight into the strengths, limitations, and suitable contexts of various forecasting techniques. The evaluation starts with simple baseline models (Naïve and Moving Average) to set initial benchmarks, then moves incrementally to more sophisticated models: statistical approaches like ARIMA and SARIMA, techniques that incorporate additional variables like VARX and SARIMAX, and machine learning algorithms such as Random Forest, XGBoost, and CatBoost. Each method undergoes rigorous validation of its predictive reliability, ease of use, and practical value, revealing where the best balance between simplicity, interpretability, and precision lies.

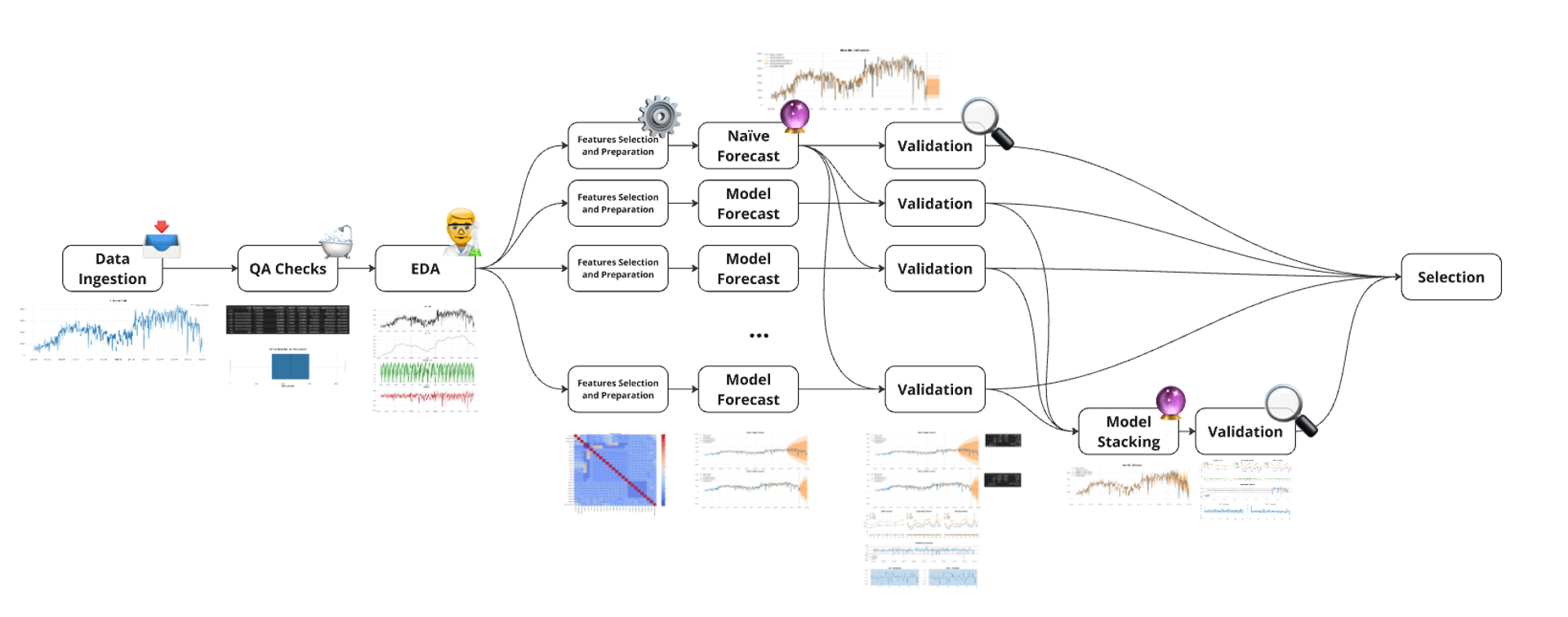

How It Works

Comprehensive Data Analysis: Initial exploration and thorough data cleaning help identify critical trends, seasonality, outliers, and stationarity within the dataset, laying the groundwork for effective forecasting evaluation.
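As a rough illustration of this exploration step, the sketch below checks a series for seasonality and outliers using only the Python standard library. The function names `autocorrelation` and `iqr_outliers`, the 1.5 × IQR threshold, and the sample data are illustrative assumptions, not the tooling used in this analysis.

```python
from statistics import mean, quantiles


def autocorrelation(series, lag):
    """Sample autocorrelation of `series` at a positive `lag`.

    A value close to 1 at lag m hints at a seasonal period of m.
    """
    mu = mean(series)
    denom = sum((x - mu) ** 2 for x in series)
    num = sum(
        (series[t] - mu) * (series[t - lag] - mu)
        for t in range(lag, len(series))
    )
    return num / denom


def iqr_outliers(series, k=1.5):
    """Indices of points outside [Q1 - k*IQR, Q3 + k*IQR].

    k=1.5 is a common rule of thumb and is an assumption here.
    """
    q1, _, q3 = quantiles(series, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [i for i, x in enumerate(series) if x < low or x > high]


# Hypothetical series with a repeating pattern of period 4.
seasonal = [10, 20, 30, 40] * 6
print(autocorrelation(seasonal, 4))  # strongly positive at the seasonal lag
print(autocorrelation(seasonal, 2))  # negative at the half-period lag

# Hypothetical series with one obvious spike at index 6.
spiky = [10, 12, 11, 13, 12, 11, 100, 12, 10, 13]
print(iqr_outliers(spiky))
```

In practice, a formal stationarity test (for example an augmented Dickey-Fuller test from a statistics library) would complement these simple checks.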

Structured Model Comparison: Models are evaluated incrementally, from baseline approaches (Naïve, Moving Average) through statistical models (ARIMA, SARIMA) to machine learning methods (XGBoost, CatBoost), and their forecasting performance is compared systematically.
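The two baselines named above are simple enough to write out directly. The following is a minimal sketch, assuming a flat multi-step forecast and a hypothetical default window of 3; the function names are illustrative and not taken from the analysis itself.

```python
def naive_forecast(history, horizon):
    """Naïve baseline: repeat the last observed value for every future step."""
    return [history[-1]] * horizon


def moving_average_forecast(history, horizon, window=3):
    """Moving Average baseline: repeat the mean of the last `window` values.

    This is the flat variant; a recursive variant that feeds forecasts
    back into the window is also common.
    """
    if window > len(history):
        raise ValueError("window is longer than the available history")
    level = sum(history[-window:]) / window
    return [level] * horizon


# Hypothetical demand series.
history = [1, 2, 3, 4, 5, 6]
print(naive_forecast(history, 2))
print(moving_average_forecast(history, 2, window=3))
```

Any more sophisticated model should beat these benchmarks on held-out data before its extra complexity is considered justified.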

Robust Validation Procedures: Models are assessed with several error metrics (RMSE, MAE, SMAPE, MASE), residual diagnostics, and window-based validation (rolling, expanding, and walk-forward), which together show where each model is reliable and where it breaks down.
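The metrics and the expanding-window scheme mentioned above can be sketched as follows. Some choices here are assumptions, since the analysis does not state which definitions it uses: SMAPE is the variant with |actual| + |forecast| in the denominator, and MASE is scaled by the in-sample error of a one-step, non-seasonal naïve forecast. All function names are illustrative.

```python
from math import sqrt


def mae(actual, forecast):
    """Mean absolute error."""
    return sum(abs(a - f) for a, f in zip(actual, forecast)) / len(actual)


def rmse(actual, forecast):
    """Root mean squared error."""
    return sqrt(sum((a - f) ** 2 for a, f in zip(actual, forecast)) / len(actual))


def smape(actual, forecast):
    """Symmetric MAPE in percent; terms with a zero denominator count as 0."""
    total = 0.0
    for a, f in zip(actual, forecast):
        denom = abs(a) + abs(f)
        if denom:
            total += 2 * abs(a - f) / denom
    return 100 * total / len(actual)


def mase(actual, forecast, train):
    """MAE scaled by the in-sample MAE of a one-step naïve forecast."""
    scale = sum(abs(train[i] - train[i - 1]) for i in range(1, len(train)))
    scale /= len(train) - 1
    return mae(actual, forecast) / scale


def expanding_window_splits(n, initial, horizon=1):
    """Yield (train_indices, test_indices); the training set grows each fold."""
    end = initial
    while end + horizon <= n:
        yield range(0, end), range(end, end + horizon)
        end += horizon


def walk_forward_mae(series, forecaster, initial, horizon=1):
    """Refit-and-forecast over expanding windows, then score all folds by MAE.

    `forecaster(history, horizon)` must return a list of `horizon` values.
    """
    actuals, preds = [], []
    for train_idx, test_idx in expanding_window_splits(len(series), initial, horizon):
        history = [series[i] for i in train_idx]
        preds.extend(forecaster(history, len(test_idx)))
        actuals.extend(series[i] for i in test_idx)
    return mae(actuals, preds)


# Usage with a naïve forecaster on a hypothetical series.
series = [1, 2, 3, 4, 5, 6]
print(walk_forward_mae(series, lambda h, k: [h[-1]] * k, initial=3))
```

A rolling-window variant would differ only in the training range, keeping a fixed number of the most recent observations instead of starting each fold at index 0.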

Clear Insights on Model Trade-offs: Analysis results explicitly highlight the trade-offs between complexity, accuracy, interpretability, and overfitting risk, providing actionable guidance for selecting the most suitable forecasting technique for each scenario.